Liam Foot Hash

An Alternative to the great, but ageing, QuickSFV

I wanted to make a program that:

- Is fast.

- Is genuinely simple to use, and easy to read.

- Performs useful integrity checks.

- Consumes little memory.

For the most useful integrity checks, I wanted a cryptographic hash function, as it would be more sensitive to changes in data versus a non-cryptographic function.

For this, I chose BLAKE3. Cryptographic hash functions are typically slow, but BLAKE3 is very fast. That speed would be counter-productive for password hashing, but it is perfect for file verification. See the benchmarks further down the page.

BLAKE3 is among the fastest cryptographic hash functions available and is great to work with. In my testing, where I replaced single bytes at random places in a file, BLAKE3 always detected the change.
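As a rough illustration of that single-byte test, here is a minimal Python sketch. It is not the program's own code: BLAKE3 is not in Python's standard library, so `hashlib.blake2b` is used as a stand-in, and the function names, chunk size, and file size are assumptions made for the example.

```python
import hashlib
import os
import random
import tempfile


def hash_file(path, chunk_size=1024 * 1024):
    """Hash a file in fixed-size chunks so memory use stays flat."""
    # Stand-in algorithm: BLAKE2b, because BLAKE3 is not in the standard library.
    hasher = hashlib.blake2b()
    with open(path, "rb") as f:
        while chunk := f.read(chunk_size):
            hasher.update(chunk)
    return hasher.hexdigest()


def flip_random_byte(path, rng):
    """Overwrite one byte at a random offset with a different value."""
    offset = rng.randrange(os.path.getsize(path))
    with open(path, "r+b") as f:
        f.seek(offset)
        original = f.read(1)[0]
        f.seek(offset)
        f.write(bytes([original ^ 0xFF]))  # XOR guarantees the byte changes
    return offset


def demo():
    """Hash a temporary file, corrupt one byte, and hash it again."""
    rng = random.Random(0)
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "sample.bin")
        with open(path, "wb") as f:
            f.write(os.urandom(256 * 1024))
        before = hash_file(path)
        flip_random_byte(path, rng)
        after = hash_file(path)
    return before, after
```

Calling `demo()` returns two digests that should differ, which is the behaviour the test above relies on.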

I am using Blake3.NET for the hashing function.

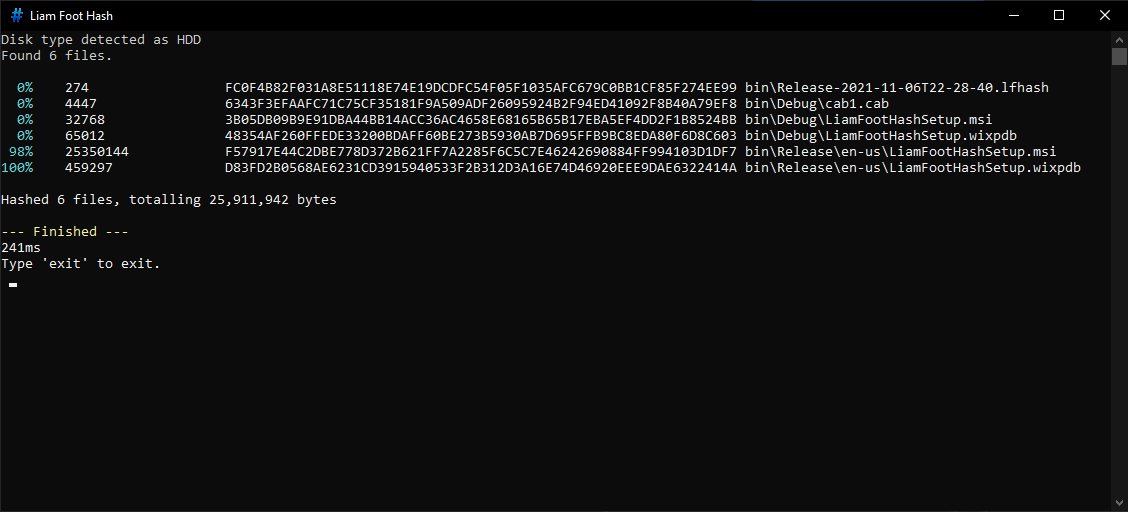

The program.

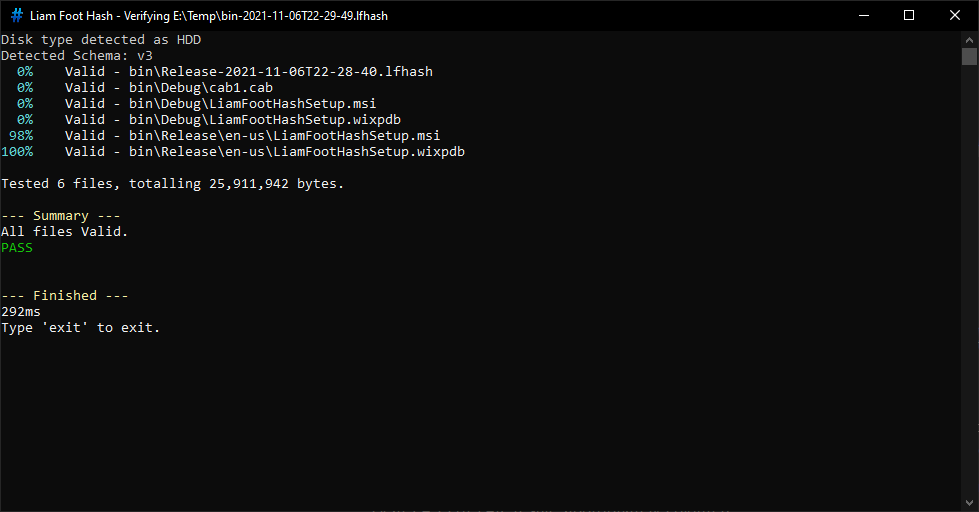

Successful Verification.

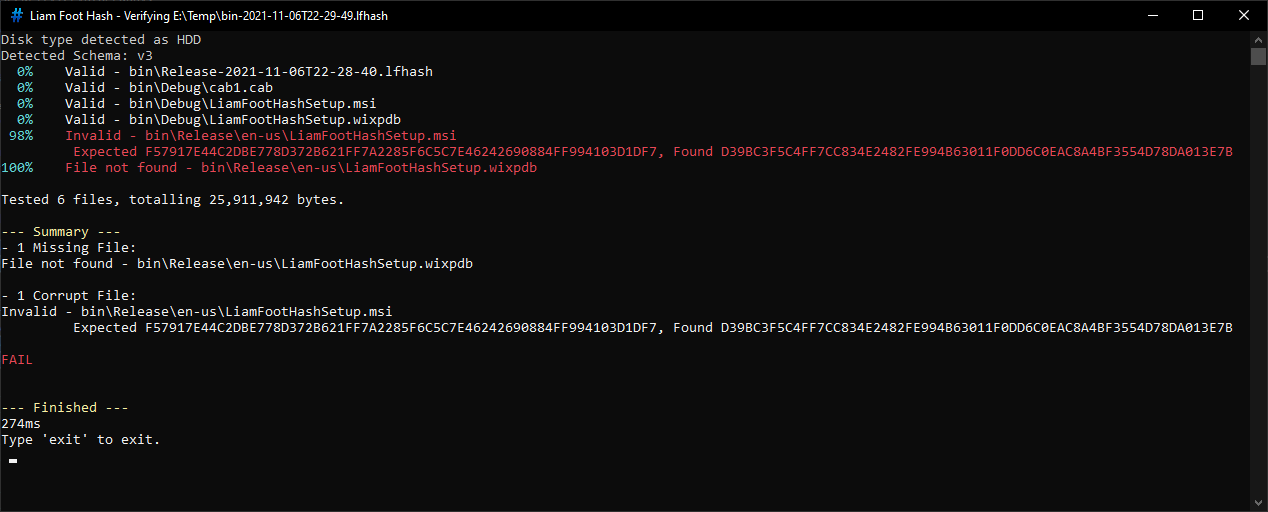

Failed Verification.

Progress is reported as each file is processed, and is based on the percentage of bytes processed. The above example is just a small testing directory, but for larger directories, I find this to be generally helpful. On the final line, the runtime is shown. This timer starts on the very first line of the program.
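Byte-based progress can be sketched as below. This is a hypothetical Python illustration rather than the program's code; the function names are made up, and treating an empty workload as 100% complete is an assumption (the version history mentions that empty files once produced NaN progress, which is the kind of edge case the guard avoids).

```python
def progress_percent(bytes_done, bytes_total):
    """Percentage of bytes processed so far."""
    if bytes_total == 0:
        # Assumption: nothing to read counts as complete, avoiding a 0/0 result.
        return 100.0
    return 100.0 * bytes_done / bytes_total


def report_progress(file_sizes):
    """Yield (name, percent) after each file, weighting files by their size."""
    total = sum(size for _, size in file_sizes)
    done = 0
    for name, size in file_sizes:
        done += size
        yield name, progress_percent(done, total)
```

Weighting by bytes rather than by file count means one large file moves the percentage more than many small ones, so the figure tracks the remaining work more closely.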

Red and green are used to denote failure and success. At the end, there is a summary section to recap any failed or missing files, and to show a clear PASS or FAIL result, appropriately coloured.
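The summary logic can be pictured with a small Python sketch. The data shapes and the `verify` name are assumptions for illustration only; the real program's internals and the .lfhash format are not shown here.

```python
def verify(expected, actual):
    """Compare stored checksums with freshly computed ones.

    expected: dict of relative path -> stored digest
    actual:   dict of relative path -> current digest (missing files are absent)
    """
    # A file fails when it still exists but its digest no longer matches.
    failed = sorted(p for p, d in expected.items() if p in actual and actual[p] != d)
    # A file is missing when it was recorded but can no longer be found.
    missing = sorted(p for p in expected if p not in actual)
    result = "PASS" if not failed and not missing else "FAIL"
    return {"failed": failed, "missing": missing, "result": result}
```

For example, `verify({"a": "1", "b": "2", "c": "3"}, {"a": "1", "b": "x"})` reports `b` as failed, `c` as missing, and an overall `FAIL`.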

When verifying existing checksums, the checksum file is shown in the window title. This is helpful when you want to start multiple verifications and check back on the results later, so that you know which results are for which checksum file.

Before the program starts, it runs disk type detection, to categorise the storage media being used as either NVMe SSD, SATA SSD, or HDD. Depending on what is detected, the program is optimized for the best throughput.
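One common way to tune for the storage type is to vary how many files are read in parallel: hard disks tend to prefer sequential access, while SSDs cope well with concurrent reads. The Python sketch below shows that idea only. The worker counts are invented for illustration and are not the program's actual settings, and the function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical worker counts, chosen only for illustration.
WORKERS_BY_DISK = {"hdd": 1, "sata_ssd": 4, "nvme": 8}


def workers_for(disk_type):
    """Pick a worker count; unknown types get the most conservative setting."""
    return WORKERS_BY_DISK.get(disk_type, 1)


def hash_all(paths, hash_one, disk_type):
    """Apply hash_one to every path with parallelism suited to the disk type.

    Results are returned in the same order as the input paths.
    """
    with ThreadPoolExecutor(max_workers=workers_for(disk_type)) as pool:
        return list(pool.map(hash_one, paths))
```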

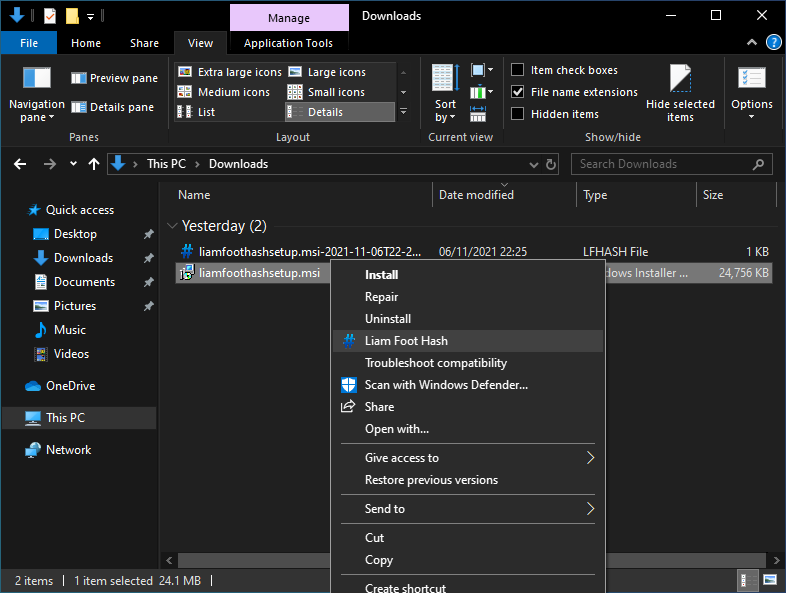

Liam Foot Hash in the File Explorer Context Menu.

Fear the command-line? Fear not, as usage is easy - right-click a file, or a directory, and you will see an entry for Liam Foot Hash with a nice icon. Click this, and the selected file, or folder (recursively hashing all files within), will be hashed.

As the program runs, it writes the results to a file next to whichever file or directory you selected. The name of this file is "{selectedFolder}-{Timestamp}.lfhash". This name is chosen automatically, which means you can save time by not having to provide a file name, and you can keep running checksums without worrying about name collisions for the result file. Other hashing programs require manual naming of the checksum file, and I find this becomes tedious.
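The naming scheme can be sketched in Python as follows. The timestamp layout is inferred from the example file name in the command-line section (`Media-2021-11-01T14-34-34.lfhash`), and the `result_file_path` helper is hypothetical; volume roots, which use a different name, are not handled.

```python
from datetime import datetime
from pathlib import PureWindowsPath


def result_file_path(selected, now):
    """Build the checksum file path next to the selected item."""
    target = PureWindowsPath(selected)
    # Assumed layout: date and time with dashes, since colons are
    # not allowed in Windows file names.
    stamp = now.strftime("%Y-%m-%dT%H-%M-%S")
    return str(target.parent / f"{target.name}-{stamp}.lfhash")
```

Because the timestamp goes down to the second, repeated runs against the same folder produce distinct file names unless they start within the same second.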

To verify the checksums, you can right-click as above, or just double-click the file. You can rename the checksum file, but you must keep the .lfhash extension.

You can store these checksums alongside your backups for convenient verification.

As a note on the right-click entry, remember that one program instance will open for each item you have selected. For this reason, if you want to hash many files, you should hash their containing folder instead.

Command-Line Usage

For command-line usage, open a command prompt in the installation directory next to LiamFootHash.exe. The default installation directory is:

%ProgramFiles(x86)%\LiamFootHash

Then run a command like the following:

LiamFootHash.exe "C:\Media"

Or

LiamFootHash.exe "C:\Media-2021-11-01T14-34-34.lfhash"

The program will create new checksums, or verify existing checksums, as appropriate.

As of version 1.4.2.0, additional arguments are supported. Note that all additional arguments must precede the path argument:

- --exit

  - Causes the program to automatically exit on completion.

- --d

  - Bypasses disk type detection and sets the disk type. Valid values are:

    - sata_ssd

    - nvme

    - hdd

  - If your disk is mis-detected, you may see a performance benefit by supplying the correct disk type. Mis-detection can occur through disk enclosures or when accessing virtualized disks.

Here is an example command with all arguments:

LiamFootHash.exe --d hdd --exit "C:\Media"
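The argument rules above (options first, path last, and a .lfhash path meaning "verify") can be modelled with a short Python sketch. This is an illustration of the documented behaviour, not the program's parser; the `parse_args` function, its return shape, and its error messages are all assumptions.

```python
VALID_DISK_TYPES = {"sata_ssd", "nvme", "hdd"}


def parse_args(argv):
    """Parse [--exit] [--d TYPE] PATH, with options required before the path."""
    auto_exit = False
    disk_type = None
    i = 0
    # Everything before the final argument must be an option.
    while i < len(argv) - 1:
        arg = argv[i]
        if arg == "--exit":
            auto_exit = True
        elif arg == "--d":
            i += 1  # the disk type is the next argument
            if i >= len(argv) - 1 or argv[i] not in VALID_DISK_TYPES:
                raise ValueError("--d needs one of: " + ", ".join(sorted(VALID_DISK_TYPES)))
            disk_type = argv[i]
        else:
            raise ValueError(f"unknown option: {arg}")
        i += 1
    if not argv or argv[-1].startswith("--"):
        raise ValueError("missing path argument")
    path = argv[-1]
    # An existing checksum file is verified; anything else gets new checksums.
    mode = "verify" if path.lower().endswith(".lfhash") else "create"
    return {"exit": auto_exit, "disk_type": disk_type, "path": path, "mode": mode}
```

With this sketch, `parse_args(["--d", "hdd", "--exit", "C:\\Media"])` selects `create` mode with the disk type forced to `hdd`, while placing `--exit` after the path raises an error.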

Justification

As part of my backup strategy, I ensure that all files are stored alongside checksums.

My backup strategy has several steps to it, but some of these involve raw files on a volume. I am primarily a Windows user, and so I almost exclusively use NTFS volumes, which are not "resilient". If I could use a more resilient file system, I would still create my own checksums, but it is even more important when using a non-resilient file system.

Why use checksums?

- Detect bit rot, where a storage volume "decays".

- Detect transfer errors between storage volumes as part of the backup process.

- Detect files that are missing from backups.

- Detect memory corruption.

In the past, I have found that some corrupt files have made it into my backups. It was at this point that I decided to start using QuickSFV to checksum my data. For files that change rarely or never (e.g. DVD rips), if your backup software reports a change in a file, you can use the checksums to verify which copy is correct. Video files in particular are quite resilient: even if a corruption has occurred, the video will often still play, so you won't know whether the file is intact unless you have checksums to run against it. Without verifying the file, small corruptions could pass through undetected.

In the past, I have used Microsoft SyncToy, a backup tool which is no longer maintained. Unfortunately, it can miss files from backups, and I did not realise this until I started using QuickSFV some time ago. I have since replaced SyncToy with FreeFileSync, but I still use checksums as I believe in multi-layered protection. Always have a fallback.

Recently, checksums enabled me to identify a memory corruption on my home server. I was in the process of making backups, and as part of that I was generating checksums, and I found that these checksums were faulting. My initial reaction was to point at the Seagate hard disk being used (which is six or seven years old), but after noticing the sporadic nature of these failures, it did not take long for me to suspect it was a fault with the system's memory modules. Corsair requested tests on each individual module and those tests showed that one module was quite unstable. Corsair has agreed to replace the memory kit. It looks like I'll be getting a 32 GB 1600MHz CAS 16 kit to replace my 16 GB 1866MHz CAS 16 kit (DDR3), but it also looks like there's a decent wait. I'll be happy if that's the replacement I get!

Checksums can be quite helpful, and I feel they are a critical layer of the backup strategy.

Platform Support

This is a .NET 8 console application, and is built for x86 Windows. For full installation, it also requires .NET Framework 4.7.2. The following Operating Systems are supported:

- Windows 10, v1607+

- Windows 11, v22H2+

- Windows Server 2016 and newer

The following operating systems may be compatible, as .NET 8 and .NET Framework 4.7.2 support them, but I am unsure if my program does:

- Windows Server 2012

LiamFootHash began with .NET 5 which supports Windows 7 SP1 with Extended Security Updates, and Windows 8.1. If you want to use LiamFootHash on those platforms, download an older version. It has not been tested below Windows 10.

Issues with QuickSFV and Other Alternatives

I like QuickSFV, but it has not been updated since 2008. That is fine in itself, and whilst it is sometimes substantially slower than my app, it mostly works, but only against a limited character set. I have files in my backups containing the Greek letter tau, Cyrillic, and other characters, which QuickSFV refuses to process. These files were therefore excluded from my checksums, which I was not happy about. When QuickSFV encounters an unsupported file, it also pauses on that file, and no further progress is made until you interact with it - so you have to keep checking on it. Sometimes I would start checksums, come back later, and find it had made no progress for the last hour.

QuickSFV is great, but its age started to show here, so I had to replace it. I did look for other software but I did not find anything built as a simple replacement for QuickSFV.

OpenHashTab

The closest alternative I have found is OpenHashTab, which does not seem to be designed as a QuickSFV replacement, and that's fine. OpenHashTab supports many different checksum formats, perhaps some you would even prefer, and that's cool. Initially I chose this as my replacement. OpenHashTab seems great for being able to select a file, and get back an assortment of checksums.

I don't want to rag on OpenHashTab here, but I found it to be a bit cumbersome to use. For instance, when I tried to checksum a 370GB folder on my 1TB 970 Evo Plus, it just froze the Explorer window for several minutes. During this time I watched the memory usage of that Explorer window climb and climb at a few megabytes per second, until reaching around 1.5 GB. At some point, OpenHashTab launches in a hung state. As the Explorer window is hung, Explorer as a whole will crash if you try to interact with that window too much. Memory drops over time back to around 500MB at completion. QuickSFV shows similar behaviour, only with less memory usage, and it hangs for much less time.

On one occasion, I got OpenHashTab to hash this folder in just under four minutes, but most attempts are closer to nine minutes due to the hanging.

I also find that when I save results from OpenHashTab into a file, I can't get the program to verify the hashes - it just hashes the hash file, rather than reading from it to verify. Some hash files do work, and some don't - I'm unsure why. Additionally, there is some information in the UI that I'm unsure how to make sense of, and I can't find a guide anywhere. I was going to test OpenHashTab from the command line to avoid issues with the Explorer integration, but I can't find any way of doing this.

It seems that OpenHashTab runs within Explorer, as QuickSFV does, and I've also had similar issues with QuickSFV crashing Explorer mid-way through hashing large folders. I don't know what mechanism they are using to integrate with Explorer, but my program will never crash or hang Explorer, as it runs separately.

When I hash this same folder using Liam Foot Hash, it peaks at 61.6 MB of memory usage, is responsive immediately, you see the results and progress in real-time, and nothing hangs.

My program is intended to fill in for QuickSFV in the modern age, and OpenHashTab did not have this design goal, and that's fine. It's still cool software and I've used it, along with QuickSFV, to help test that my app provides the expected results.

Benchmarks

All benchmarks were run on an Intel Core i7-8700K at 4.9 GHz on all cores, with no AVX offset, and 32 GB of 3200MHz CAS 14 memory in dual channel.

When measuring memory usage for OpenHashTab and QuickSFV, I record the memory usage of the Explorer process they run under, since they seem to run as a child of Explorer and I can't see direct memory information for these programs. OpenHashTab has only the BLAKE3 algorithm selected. Note also that OpenHashTab does not automatically write results to a file.

Before each individual test, Microsoft's RamMap tool was used to clear the Standby List, which removes the processed files from Windows' cache.

The 970 Evo Plus is a strong NVMe SSD. The N300 is a NAS-rated HDD. As mentioned before, Liam Foot Hash optimizes for the type of storage it is running on. I have found that the algorithm produces great results for 850 EVO, 860 EVO, 970 Evo Plus, and N300. There are a lot of different storage devices out there, so if you decide to run your own benchmarks and think the algorithm is not working for you, please let me know.

300GB folder, 12k files, 970 Evo Plus 1TB, XTS-AES 256 Bitlocker

| Program | Peak Memory Usage | Runtime |
| --- | --- | --- |
| Liam Foot Hash | 25 MB | 1 minute, 43 seconds |
| QuickSFV | 110 MB | Too tedious to benchmark. Around 20 minutes in, it complains about a file with unsupported characters, and pauses there. The progress bar was around 15% complete. |
| OpenHashTab | 1164 MB | 1 minute, 52 seconds (with around 3 second hang), and 3 minutes, 18 seconds (with longer hang) |

72 GB folder, 72k files, 970 Evo Plus 1TB, XTS-AES 256 Bitlocker

| Program | Peak Memory Usage | Runtime |
| --- | --- | --- |
| Liam Foot Hash | 74.5 MB | 30 seconds |
| QuickSFV | 118 MB | 15 minutes, 48 seconds |
| OpenHashTab | 1.3 GB | 6 minutes, 57 seconds |

72 GB folder, 72k files, Toshiba N300 4TB, XTS-AES 256 Bitlocker (same files as above)

| Program | Peak Memory Usage | Runtime |
| --- | --- | --- |
| Liam Foot Hash | 46.4 MB | 10 minutes, 45 seconds |
| QuickSFV | 120 MB | 25 minutes, 6 seconds |
| OpenHashTab | 1.4 GB | 24 minutes, 4 seconds |

10GB folder, 1200 files, Toshiba N300 4TB, XTS-AES 256 Bitlocker

| Program | Peak Memory Usage | Runtime |
| --- | --- | --- |
| Liam Foot Hash | 14.9 MB | 1 minute, 6 seconds |
| QuickSFV | 88.0 MB | 1 minute, 57 seconds |
| OpenHashTab | 1124 MB | 1 minute, 23 seconds |

Version History

- Initial Launch Version

- When attempting to hash a file or directory for which the user has insufficient permissions, or where the save file cannot be created due to insufficient permissions, the program no longer crashes, and informs the user that they can run Liam Foot Hash as an administrator to complete the work.

- You can now hash volumes. The save file exists within the volume root. A right-click entry has been added. You can also use the command-line e.g. "LiamFootHash.exe E:\". This creates the hash file "E:\Volume-{timestamp}.lfhash".

- At the end of the program, the save file name is now shown.

- Resolved an issue where the uninstaller could fail, due to failing to clean up registry keys.

- Resolved an issue with disk type detection.

- The program will now check for updates on each launch, and offer to install them. This will ensure that people are kept up to date with new features and fixes. Updates can be skipped.

- The update check is done in parallel so that the program is not delayed.

- Resolved an issue where files hashed directly inside a volume root were stored with the drive letter, so the .lfhash file contained their full file path. This meant that the files could not be verified if moved; or, if copied, the old, still-existing version would be verified rather than the new one.

- Disk type detection is now functional for disks containing multiple data volumes.

- Resolved NaN progress reported when hashing empty files.

- When a single file was hashed, it could not be verified due to an additional character that was present in the checksum file. This was introduced with the previous update and is now resolved.

- Added support for --exit and --d command-line switches. These auto-exit on program completion, and specify disk types. See the "Command-Line Usage" section for details.

- Upgraded from .NET 6 to .NET 8, as .NET 6 reaches end-of-life on 12 November 2024.

- The program now uses ReadyToRun Compilation so it may launch slightly faster.

Known Issues

- None.

Download

If you use my app, let me know what you think, and please let me know if you encounter any bugs. I have been testing this as part of my own backup strategy, and at least in my usage, I have ironed out all the bugs that I encountered.

You can use the contact form linked in the navbar to report issues or feedback.